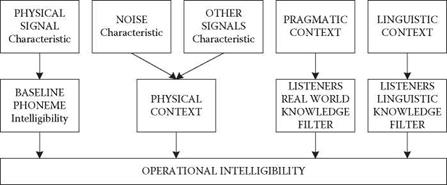

Many factors contribute to the operational intelligibility of speech. Simpson et al. (1985) proposed a model for operational intelligibility (Figure 3.16). It is important to note that intelligibility and the naturalness of speech are not necessarily correlated. A radio announcer heard against a background of static noise may sound natural but have low intelligibility for the listener. Conversely, synthesised speech warning messages in an aircraft cockpit may sound mechanical, but pilots consider them to be more intelligible than ‘live’ messages received over the aircraft radio.

Comprehensive design guidelines in this area are still very limited, but some general points can be made. Although the ergonomics/human factors literature includes reports of research that supports certain principles of speech design, this knowledge has not yet been formulated into design guidelines. Human factors methodology is sufficiently well developed to permit experimental comparison of task-specific speech systems, but the tools required for producing generic design guidelines for speech systems are not yet available. In the short term, simulation of speech system capabilities, in conjunction with the development of improved system performance, should prove a productive methodology for achieving these aims.

Intelligibility Enabling Factors

FIGURE 3.16 Factors that contribute to operational intelligibility. (Simpson et al., 1985. Redrawn from Human Factors, 23, p. 131. © The Human Factors Society, Inc. With permission.)

In general, speech generation algorithms seem to be more advanced than speech recognition algorithms. Reasonably intelligible text-to-speech algorithms that work from standard English spelling are now available commercially. Their recognition counterparts, speech-to-text algorithms, are also available in the form of dictation systems. The available systems seem to be heavily user-dependent: some people regard the quality as very high, while for others it is unacceptably low. Systems that translate speech into control actions are more reliable, particularly if the expected vocabulary is limited. For example, in an emergency, when the operator shouts ‘Stop generator!’, the system will recognise the instruction and act accordingly.

In the short term, current recognition algorithms appear adequate for use in favourable environments with low to moderate noise levels, and for applications that require only small vocabularies and do not place the operator under stress. Great caution must be exercised when using current technology in stressful situations.
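
The limited-vocabulary approach to speech control described above can be illustrated with a minimal Python sketch. It is not taken from any of the systems discussed: the `Recognition` record, the `CommandDispatcher` class, and the confidence threshold of 0.8 are all hypothetical, and the speech recogniser itself is assumed to exist elsewhere and to report a transcript with a confidence score. The sketch only shows how restricting the vocabulary, and rejecting uncertain results, might be expressed.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Recognition:
    """Output of a hypothetical recogniser: a transcript and a confidence score."""
    text: str
    confidence: float  # assumed to lie between 0.0 and 1.0


def normalise(utterance: str) -> str:
    """Lower-case the utterance and drop punctuation and extra spaces."""
    kept = "".join(ch for ch in utterance.lower() if ch.isalnum() or ch.isspace())
    return " ".join(kept.split())


class CommandDispatcher:
    """Maps a small, fixed vocabulary of phrases to control actions."""

    def __init__(self, min_confidence: float = 0.8) -> None:
        # The threshold is an illustrative assumption, not a recommended value.
        self.min_confidence = min_confidence
        self._actions: Dict[str, Callable[[], str]] = {}

    def register(self, phrase: str, action: Callable[[], str]) -> None:
        """Add one phrase to the vocabulary."""
        self._actions[normalise(phrase)] = action

    def dispatch(self, result: Recognition) -> Optional[str]:
        """Run the matching action, or return None if the result is rejected."""
        if result.confidence < self.min_confidence:
            return None  # too uncertain, e.g. in a noisy environment
        action = self._actions.get(normalise(result.text))
        if action is None:
            return None  # phrase is outside the limited vocabulary
        return action()


dispatcher = CommandDispatcher()
dispatcher.register("stop generator", lambda: "generator stopped")
outcome = dispatcher.dispatch(Recognition("Stop generator!", 0.93))
```

In this sketch an utterance that is recognised with low confidence, or that falls outside the registered vocabulary, produces no action at all. In a real control room the system would more plausibly ask the operator to repeat or confirm the command.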

Speech generation algorithms, on the other hand, have demonstrated acceptable performance even under conditions of severe noise and high workload. This technology is sufficiently advanced to be applied, provided that careful attention is paid to ergonomic integration. As early as 1980, Simpson and Williams studied the use of synthesised voice for cockpit warnings. They concluded that voice warnings do not need to be preceded by an alerting tone, as the quality of the spoken language makes the warning readily understandable by the listener.